The attribution of the National Gallery’s Samson and Delilah (c. 1609–10) to Peter Paul Rubens has long been debated. When the painting resurfaced on the market in 1929, it was offered as a work by the Dutch Caravaggist Gerrit van Honthorst; the scholar Ludwig Burchard immediately declared it a Rubens, but questions have remained over the style and provenance of the work. Earlier this autumn, several media outlets reported that a Swiss company using artificial intelligence (AI) to assess the authenticity of artworks had calculated a 91.78 per cent probability that Samson and Delilah was not painted by Rubens. The same company also wrote a report on another painting in the National Gallery – A View of Het Steen in the Early Morning (c. 1636) – which stated a 98.76 per cent probability that Rubens painted the work. (To our knowledge, the attribution of this painting has not been questioned.) This news, with its highly confident claims and oddly precise probabilities, surprised us as researchers who have been deeply involved in problems around art attribution for many years, specialising in developing AI technology and scientific methods for these questions. While the call for a deattribution of a significant painting at a major museum is ripe for publicity, it is difficult to take these particular conclusions at face value without an in-depth, peer-reviewed publication of the AI methods employed.

Is AI ready to tell us whether or not Samson and Delilah was painted by Rubens? To address this question, an AI researcher would first create ‘training sets’ that contain many examples of paintings by Rubens in one set, and many examples of works by other artists who might be confused with Rubens in another set. Through well-established algorithms that ‘teach’ the AI to accept paintings similar to the first set and reject paintings in the second set, the AI will learn to differentiate Rubens’s paintings from the others with (ideally) sufficient accuracy to answer attribution questions. However, this process has a number of pitfalls that must be properly understood if they are to be avoided.
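
The two-set training process described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the ‘paintings’ are synthetic two-number feature vectors rather than real images, the model is a plain logistic regression, and the names `make_set`, `train` and `predict_proba` are hypothetical. It is not the method used by any company or laboratory.

```python
import math
import random


def make_set(rng, centre, n):
    """Generate n synthetic 2-D feature vectors scattered around a centre.

    Stand-in for features extracted from real paintings (an assumption).
    """
    return [[rng.gauss(centre[0], 0.3), rng.gauss(centre[1], 0.3)]
            for _ in range(n)]


def predict_proba(weights, bias, x):
    """Model score in (0, 1); higher means 'more like the first set'."""
    z = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-z))


def train(features, labels, epochs=200, lr=0.5):
    """Fit a logistic regression by batch gradient descent."""
    weights, bias = [0.0] * len(features[0]), 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(weights), 0.0
        for x, y in zip(features, labels):
            err = predict_proba(weights, bias, x) - y
            grad_b += err
            for j, xj in enumerate(x):
                grad_w[j] += err * xj
        bias -= lr * grad_b / n
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
    return weights, bias


rng = random.Random(0)
rubens = make_set(rng, (1.0, 1.0), 100)    # set 1: works by the artist
others = make_set(rng, (-1.0, -1.0), 100)  # set 2: works by other artists

# Hold back a quarter of each set so accuracy is measured on unseen examples.
train_x = rubens[:75] + others[:75]
train_y = [1] * 75 + [0] * 75
test_x = rubens[75:] + others[75:]
test_y = [1] * 25 + [0] * 25

weights, bias = train(train_x, train_y)
accuracy = sum(
    (predict_proba(weights, bias, x) >= 0.5) == bool(y)
    for x, y in zip(test_x, test_y)
) / len(test_y)
print(f"held-out accuracy: {accuracy:.2f}")
```

Even in this toy setting, the number returned by `predict_proba` is a model score whose meaning depends entirely on the two training sets; it should not be read as a calibrated probability of authorship without separate validation.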

One of the problems in identifying the work of Rubens and other Old Masters is their workshop practice. As a successful and sought-after painter, Rubens ran a large workshop where multiple assistants would sometimes work on a single painting. The challenge of definitively attributing such a painting based on an overall AI analysis nonetheless provides a wonderful opportunity to explore an interesting question: how can we identify the ‘hand of the master’ in contrast to the workshop assistants? Usefully, both the hands of the master and of the assistants are in the same painting, so there are none of the differences between paintings that would otherwise be additional factors to complicate the analysis.

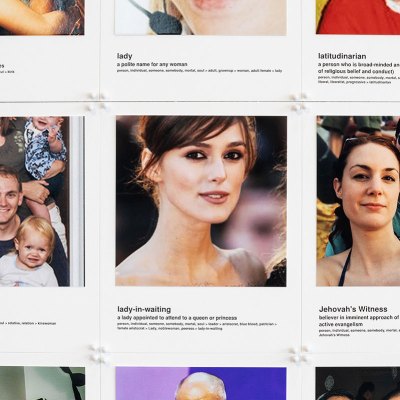

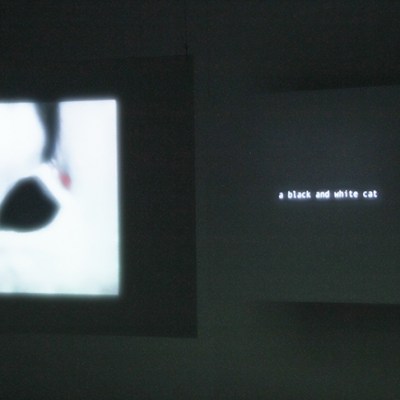

Many available pre-built AI methods designed for image recognition are built to analyse and categorise images as similarly to a human observer as possible. Common examples include software for facial recognition or image classification (to identify a cat versus a dog, for example). When an artwork has been misattributed – or worse, created to deceive – the images and features are of course similar to those of an authentic painting. An AI designed merely to mimic human judgement and (mis)interpretation will fall prey to the same mistakes that a forger relies upon. The AI will replicate the viewpoint of the connoisseur, but may not improve upon it. Forgers, however, have created paintings to deceive human eyes and human judgement when viewed in visible light. Under different illumination such as infrared or ultraviolet light, or by using pigment identification, the forgery may become readily apparent. So we must further develop AI methods that focus on more subtle aspects of the work – for example, by characterising individual brush strokes.

Other issues can arise when AI methods key in on irrelevant details that would be promptly disregarded by an art historian. Images provided by museums have been captured with various equipment, such as cameras and scanners. AI can easily detect the irrelevant features introduced by the equipment, such as image compression or lens distortion, and use them as ‘shortcuts’ instead of discriminating based upon the relevant features, which are more difficult to analyse, such as the brush strokes of the artist. An inappropriate AI method can reject the attribution of a work simply because its image was captured by a particular device or compressed using a particular technique. Two recent examples from the medical imaging field showed that deep learning models for detecting pneumonia and Covid-19 could learn shortcuts based on spurious text on the hospital X-rays or other imaging artefacts instead of the medically relevant factors in the image. For these reasons, the AI must be trained on a set of images that have been collected in consistent and well-documented conditions.
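
One simple way to check a training set for this kind of shortcut, before any model is trained, is to ask how well the label could be guessed from the capture device alone. The sketch below is a hypothetical audit under stated assumptions: the function name `device_shortcut_audit`, the device names and the labels are all invented for illustration, and a real audit would also need to consider compression settings, resolution and other acquisition metadata.

```python
from collections import Counter, defaultdict


def device_shortcut_audit(records):
    """Compare guessing the label blindly with guessing it from the device.

    records: iterable of (device, label) pairs from the image metadata.
    Returns (baseline_accuracy, device_only_accuracy). A device-only
    accuracy well above the baseline means the capture equipment is
    confounded with the label and could be learned as a shortcut.
    """
    records = list(records)
    if not records:
        raise ValueError("no records to audit")

    # Baseline: always guess the most common label.
    label_counts = Counter(label for _, label in records)
    baseline = max(label_counts.values()) / len(records)

    # Device-only: guess the most common label seen for each device.
    per_device = defaultdict(Counter)
    for device, label in records:
        per_device[device][label] += 1
    correct = sum(max(counts.values()) for counts in per_device.values())
    return baseline, correct / len(records)


# Hypothetical metadata: every 'rubens' image came from one scanner.
confounded = [("scanner_A", "rubens")] * 40 + [("camera_B", "other")] * 40

# Hypothetical metadata: both devices photographed both classes equally.
balanced = (
    [("scanner_A", "rubens")] * 20 + [("camera_B", "rubens")] * 20
    + [("scanner_A", "other")] * 20 + [("camera_B", "other")] * 20
)

print(device_shortcut_audit(confounded))
print(device_shortcut_audit(balanced))
```

In the confounded set the device identifies the label perfectly, so a model could score well without ever examining brushwork; in the balanced set the device carries no information about the label.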

By analysing a work stylistically, AI can complement traditional connoisseurship in an automated fashion and at scale. Nevertheless, machine learning methods should not supplant connoisseurship, or hide the problems that bedevil it behind an apparently incontestable result from an AI. Given that AI is still at an early stage of development, any conclusions must be drawn with an understanding of the limitations of the training set, and be contextualised and subjected to a ‘reality check’ by incorporating multiple approaches. Such a multi-pronged approach will add to the interpretative power of this tool and teach us more about the artists’ working methods and, ultimately, about attribution. As Jennifer Mass, founder of Scientific Analysis of Fine Art (SAFA), notes, ‘the most robust attribution approach is always one in which a painting is examined with a range of methodologies using experts across multiple disciplines. The approach of a single person or analysis tool acting as a sole arbiter of attribution is outdated.’ We believe AI has great potential for questions of attribution, but only if it is applied with sound methods. The AI method must be transparently validated and scientifically peer-reviewed so that collectors and researchers can understand both its capacity and limitations.

Ahmed Elgammal is director of the Art and AI Laboratory at Rutgers University, New Jersey. Adam Finnefrock is vice-president of Scientific Analysis of Fine Art, LLC.